Analyzing AWS VPC Flow Logs via CloudWatch Logs Insights and Athena

This blog post explains how to use CloudWatch Logs Insights and Athena to analyze AWS VPC Flow Logs interactively, in near real time.

AWS Flow Logs

Flow logs can be enabled at three levels in AWS.

- VPC level

- Subnet level

- ENI (network interface) level

For the purpose of this blog post, we will focus only on VPC-level flow logs.

AWS VPC Flow Logs

AWS VPC Flow logs can track the following information related to VPC traffic.

- Source/Destination IP Address

- Source/Destination Port

- Protocol

- Bytes

- ACCEPT/REJECT status
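To make the fields above concrete, here is a minimal Python sketch that splits one flow log record into named fields. It assumes the default (version 2) record format, where the fields are space-separated; the function name `parse_flow_log_record` and the sample account and interface IDs are illustrative only.

```python
# Field order of the default (version 2) flow log record format.
DEFAULT_FIELDS = (
    "version", "account_id", "interface_id", "srcaddr", "dstaddr",
    "srcport", "dstport", "protocol", "packets", "bytes",
    "start", "end", "action", "log_status",
)

# Fields that hold integers when data was recorded.
NUMERIC_FIELDS = ("srcport", "dstport", "protocol", "packets", "bytes", "start", "end")


def parse_flow_log_record(line: str) -> dict:
    """Split one space-separated record into a dict keyed by field name."""
    values = line.split()
    if len(values) != len(DEFAULT_FIELDS):
        raise ValueError(f"expected {len(DEFAULT_FIELDS)} fields, got {len(values)}")
    record = dict(zip(DEFAULT_FIELDS, values))
    # A field is "-" when nothing was recorded for it (e.g. NODATA records).
    for name in NUMERIC_FIELDS:
        record[name] = None if record[name] == "-" else int(record[name])
    return record


# Illustrative record: SSH traffic (port 22, protocol 6 = TCP) that was accepted.
sample = (
    "2 123456789010 eni-1235b8ca123456789 172.31.16.139 172.31.16.21 "
    "20641 22 6 20 4249 1418530010 1418530070 ACCEPT OK"
)
rec = parse_flow_log_record(sample)
print(rec["srcaddr"], rec["dstaddr"], rec["dstport"], rec["action"])
```

Records captured with a custom format have a different field order, so `DEFAULT_FIELDS` would need to be adjusted in that case.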

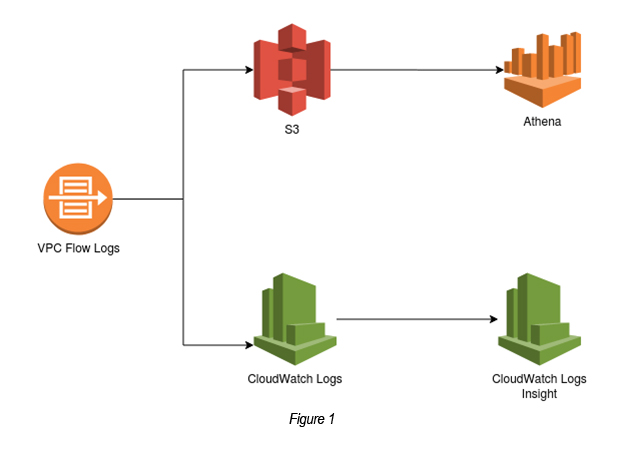

You can send VPC Flow log outputs to two main destinations.

- S3 Buckets

- CloudWatch Logs

Logs delivered to CloudWatch Logs can then be queried interactively with CloudWatch Logs Insights, and logs delivered to S3 can be queried with Athena (see Figure 1).

Let’s work through the setup in Figure 1 step by step.

Steps

Step 01: Create a Custom VPC

Create a custom VPC with a public subnet if you do not already have one, then launch an EC2 instance (t2.micro) in the public subnet.

(Note: You may use either the default VPC or a custom VPC here. A custom VPC is recommended for production setups, so let’s stick to best practices.)

Step 02: Create a VPC Flow Log (Destination = CloudWatch Logs)

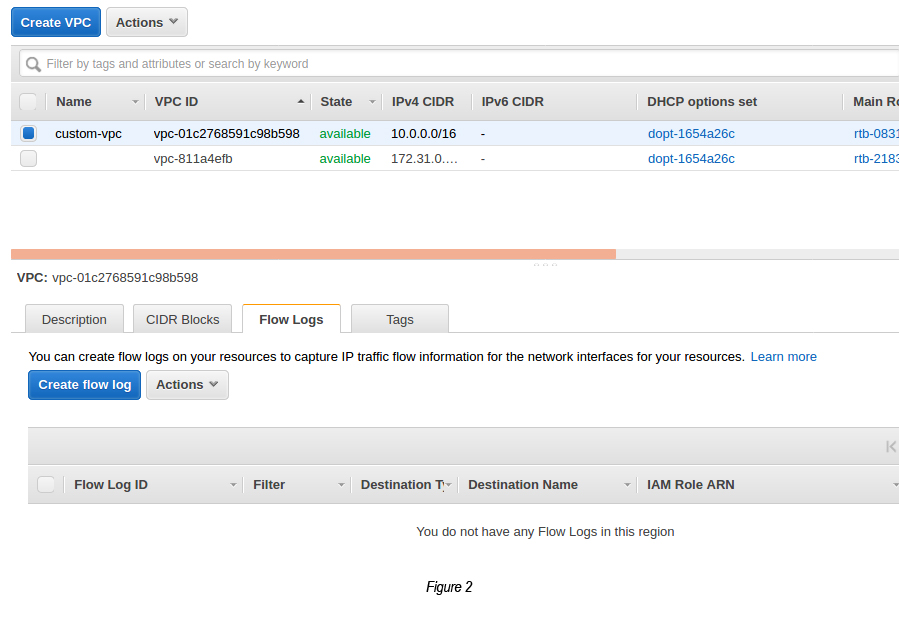

Select the Custom VPC that you’ve created and click the Flow Logs tab (See Figure 02).

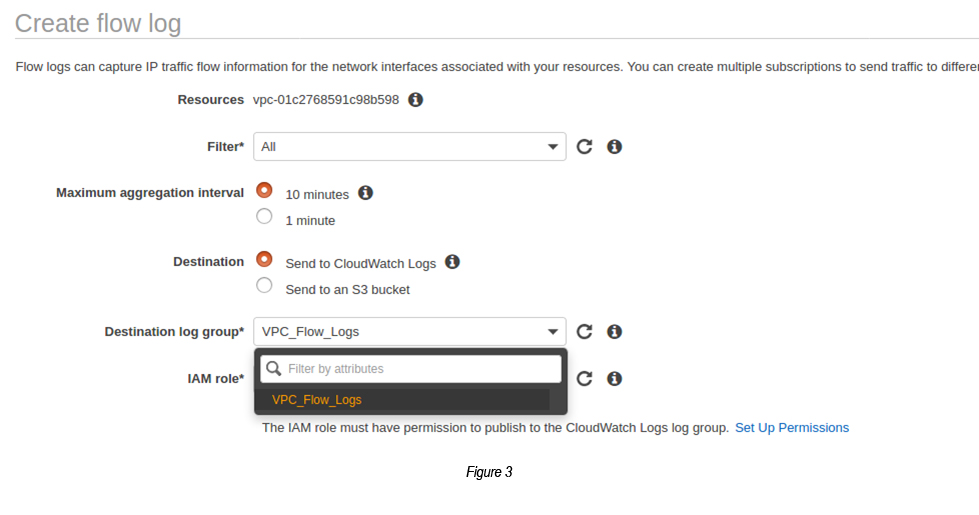

Now, click the Create flow log button and select the following:

Filter: All

Max Aggregation Interval: 10 minutes

Destination: Send to CloudWatch Logs

Destination Log Group: <Select a log group in CloudWatch Logs. If you do not have one, create a log group dedicated to VPC flow logs.>

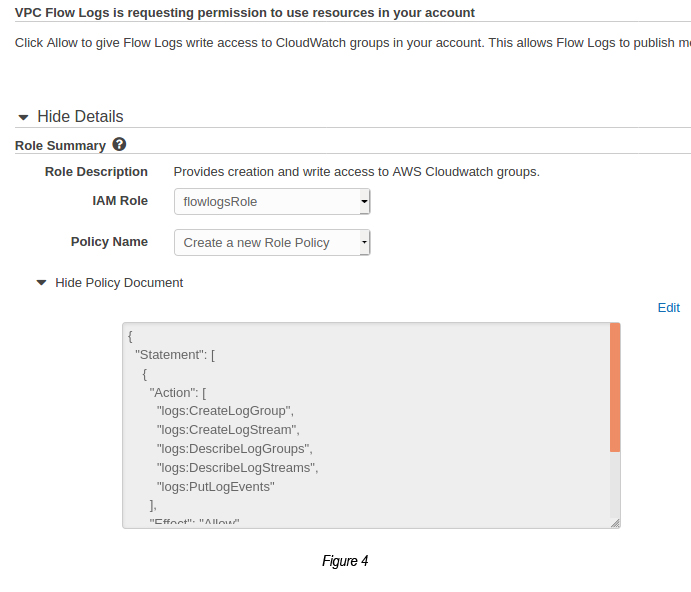

IAM Role: <An IAM role is required to publish VPC flow logs to CloudWatch Logs. Click the “Setup Permissions” link shown just below the field, then click the “Allow” button to create the role with the required permissions.>

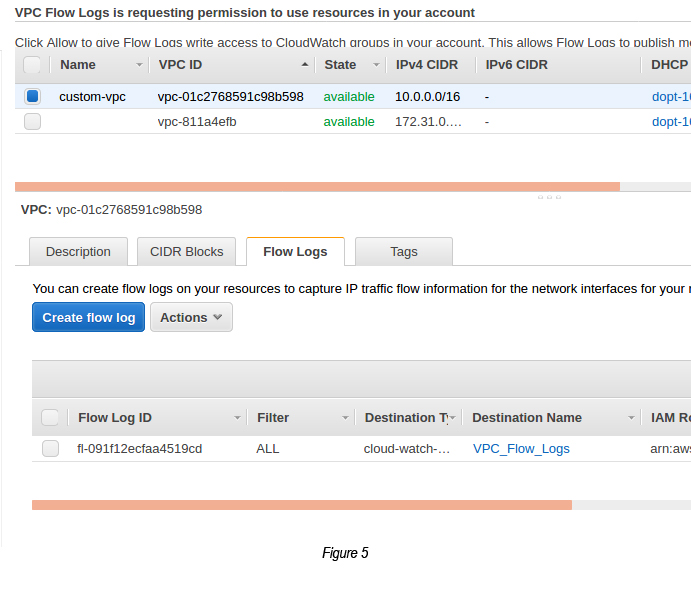
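For readers who prefer to create the role themselves, the sketch below shows, as Python dictionaries, the two policy documents such a role typically needs: a trust policy that lets the VPC Flow Logs service assume the role, and a permissions policy that lets it write to CloudWatch Logs. The variable names are illustrative, and the wildcard resource is an assumption that you may want to narrow to your own log group.

```python
import json

# Trust policy: allows the VPC Flow Logs service to assume the role.
trust_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Principal": {"Service": "vpc-flow-logs.amazonaws.com"},
            "Action": "sts:AssumeRole",
        }
    ],
}

# Permissions policy: allows the role to create and write log streams.
# Resource "*" is an assumption for brevity; scope it down where possible.
permissions_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": [
                "logs:CreateLogGroup",
                "logs:CreateLogStream",
                "logs:PutLogEvents",
                "logs:DescribeLogGroups",
                "logs:DescribeLogStreams",
            ],
            "Resource": "*",
        }
    ],
}

# Serialize to JSON, ready to paste into the IAM console.
trust_policy_json = json.dumps(trust_policy, indent=2)
permissions_policy_json = json.dumps(permissions_policy, indent=2)
print(trust_policy_json)
print(permissions_policy_json)
```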

Now, in the IAM Role dropdown, search for the role that you just created and click the Create button to create the flow log. This flow log captures all traffic that passes through the custom VPC and stores the records in the CloudWatch log group that you selected (see Figure 05).
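The same settings can also be applied programmatically. The following is a minimal sketch, assuming boto3 is installed and AWS credentials are configured; it builds the parameters for the EC2 `create_flow_logs` call that correspond to the console choices above. The VPC ID, log group name, and role ARN shown are hypothetical placeholders.

```python
def build_flow_log_request(vpc_id: str, log_group_name: str, role_arn: str) -> dict:
    """Build create_flow_logs parameters mirroring the console settings above."""
    return {
        "ResourceIds": [vpc_id],
        "ResourceType": "VPC",
        "TrafficType": "ALL",                      # Filter: All
        "MaxAggregationInterval": 600,             # 10 minutes, in seconds
        "LogDestinationType": "cloud-watch-logs",  # Send to CloudWatch Logs
        "LogGroupName": log_group_name,
        "DeliverLogsPermissionArn": role_arn,
    }


def create_flow_log(request: dict) -> dict:
    """Send the request to AWS. Requires boto3 and valid credentials."""
    import boto3  # imported lazily so the builder works without boto3

    return boto3.client("ec2").create_flow_logs(**request)


# Hypothetical identifiers, for illustration only.
request = build_flow_log_request(
    vpc_id="vpc-0123456789abcdef0",
    log_group_name="vpc_flow_logs",
    role_arn="arn:aws:iam::123456789012:role/flow-logs-role",
)
print(request)
```

Calling `create_flow_log(request)` would create the flow log; it is left uncalled here so the sketch can be run without touching an AWS account.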

Step 03: Analyze CloudWatch Logs

Once the VPC Flow Log is created, you can watch records arrive in CloudWatch Logs as traffic flows through the VPC’s network interfaces.

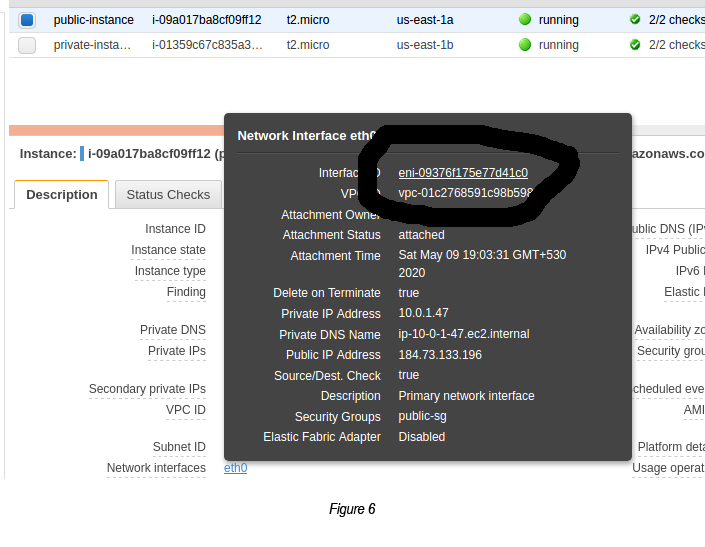

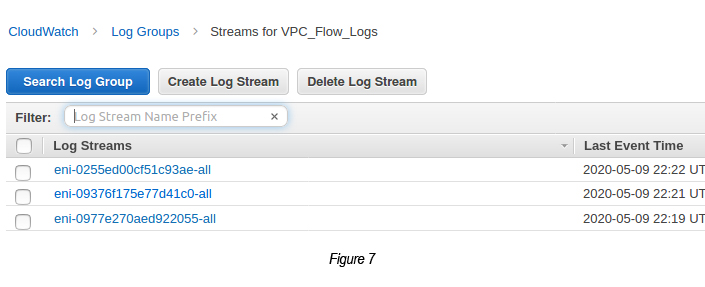

Go to CloudWatch → Log Groups → Select the flow log’s log group → Select the log stream named after the ENI of the targeted EC2 instance.

(Note: You can find the EC2 instance’s ENI by clicking the eth0 link shown in the EC2 instance description.)

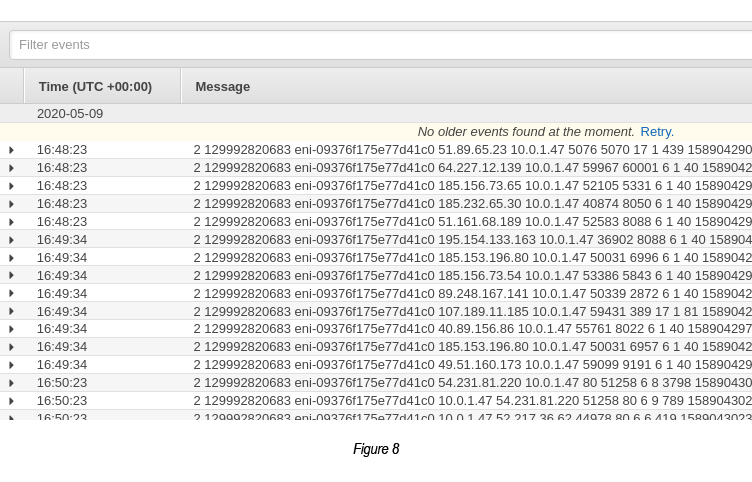

Select the ENI-related log stream. You will see something similar to the following:

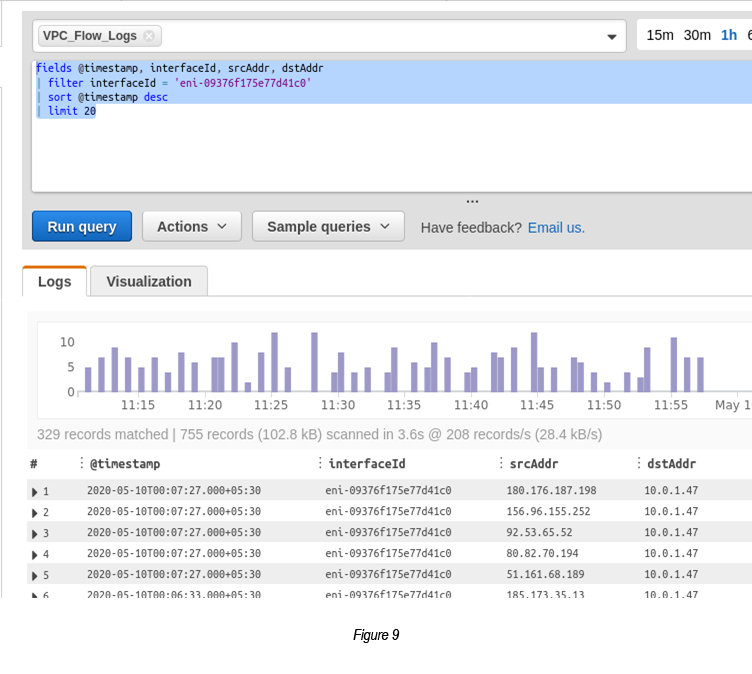

Step 04: Query CloudWatch Logs via CloudWatch Logs Insights

Go to CloudWatch → Select Logs → Select Insights

From the dropdown at the top, select the CloudWatch Log Group that you want to query.

Execute the following query in the query box:

fields @timestamp, interfaceId, srcAddr, dstAddr

| filter interfaceId = 'eni-09376f175e77d41c0'

| sort @timestamp desc

| limit 20

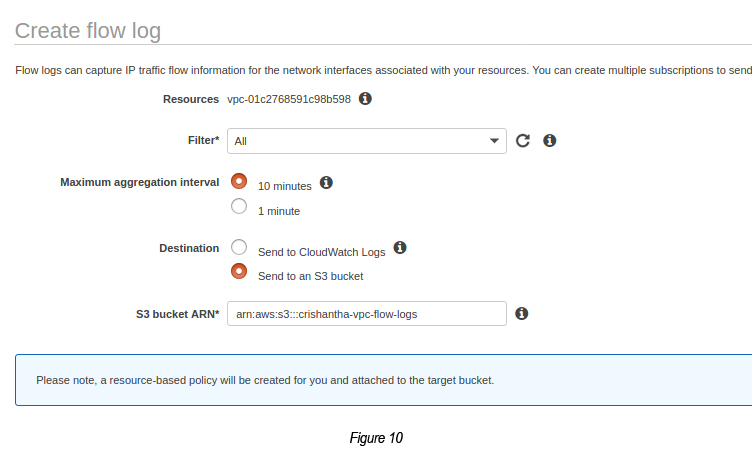
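The query above can also be run outside the console. Here is a minimal sketch, assuming boto3 and valid credentials, that builds the same query text for a given ENI and submits it through the CloudWatch Logs `start_query` and `get_query_results` calls. The helper names and the one-second polling delay are illustrative choices.

```python
import re
import time


def build_insights_query(interface_id: str, limit: int = 20) -> str:
    """Return the Logs Insights query from this step for one ENI."""
    # Reject anything that does not look like an ENI ID before embedding it.
    if not re.fullmatch(r"eni-[0-9a-f]+", interface_id):
        raise ValueError(f"unexpected interface id: {interface_id!r}")
    return "\n".join([
        "fields @timestamp, interfaceId, srcAddr, dstAddr",
        f"| filter interfaceId = '{interface_id}'",
        "| sort @timestamp desc",
        f"| limit {int(limit)}",
    ])


def run_insights_query(log_group: str, query: str, start: int, end: int) -> dict:
    """Run the query and poll until it finishes. start/end are epoch seconds."""
    import boto3  # imported lazily so the builder works without boto3

    logs = boto3.client("logs")
    query_id = logs.start_query(
        logGroupName=log_group, startTime=start, endTime=end, queryString=query
    )["queryId"]
    while True:
        result = logs.get_query_results(queryId=query_id)
        if result["status"] not in ("Scheduled", "Running"):
            return result
        time.sleep(1)


query = build_insights_query("eni-09376f175e77d41c0")
print(query)
```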

Step 05: Create a VPC Flow Log (Destination = S3 Bucket)

Create an S3 bucket (crishantha-vpc-flow-logs).

Copy the S3 bucket ARN using the copy ARN button (arn:aws:s3:::crishantha-vpc-flow-logs).

Go to VPC → Select the Custom VPC → Click Flow Logs tab → Click Create Flow Log → Set the destination to the S3 bucket and paste the bucket ARN

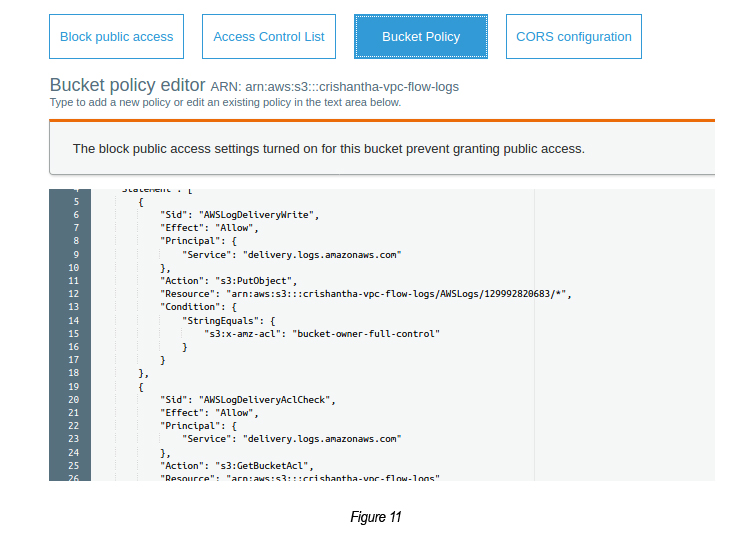

This creates a VPC Flow Log that delivers its output to the S3 bucket. Once the flow log is created, the required bucket policy is attached to the S3 bucket automatically.

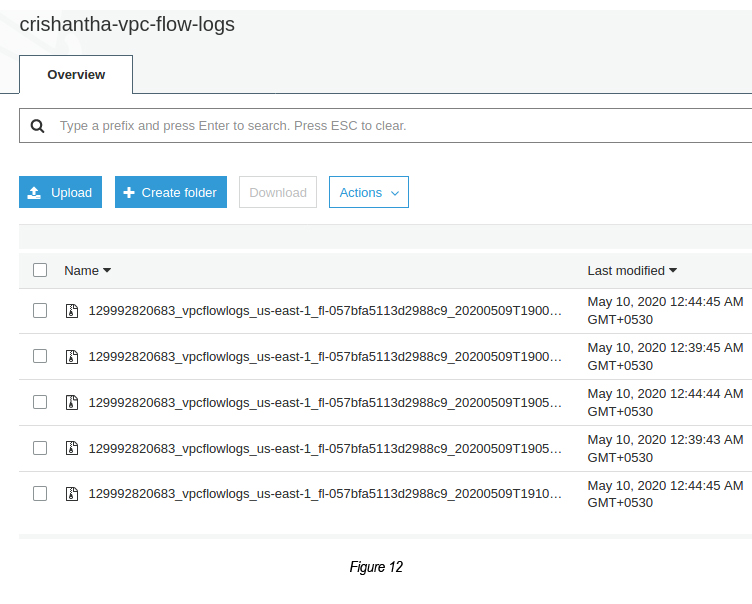

Now, you can check the S3 bucket for any logs.

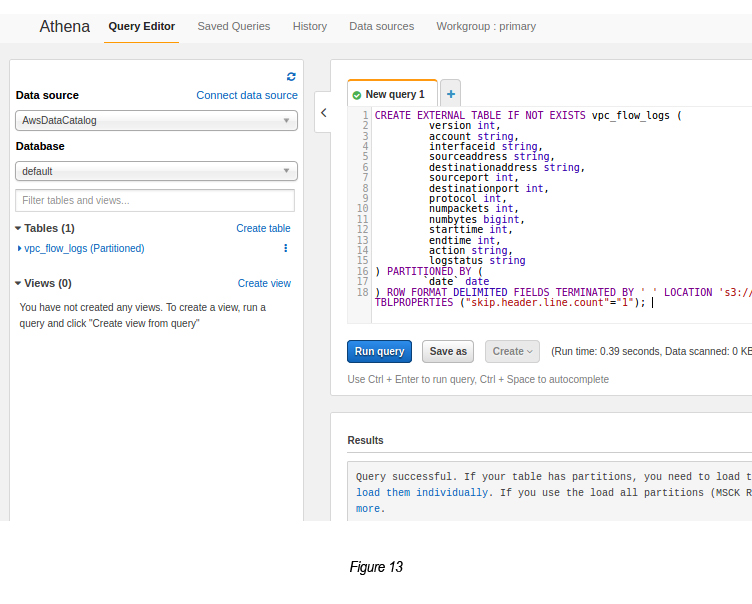
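As with the CloudWatch Logs destination, this step can be scripted. The sketch below, assuming boto3 and valid credentials, builds the `create_flow_logs` parameters for an S3 destination; the VPC ID is a hypothetical placeholder. An S3 destination is addressed by the bucket ARN and does not take the log group or IAM role parameters.

```python
def bucket_arn(bucket_name: str) -> str:
    """Return the ARN of an S3 bucket, as shown by the copy ARN button."""
    return f"arn:aws:s3:::{bucket_name}"


def build_s3_flow_log_request(vpc_id: str, bucket_name: str) -> dict:
    """Build create_flow_logs parameters for delivery to an S3 bucket."""
    return {
        "ResourceIds": [vpc_id],
        "ResourceType": "VPC",
        "TrafficType": "ALL",
        "LogDestinationType": "s3",
        "LogDestination": bucket_arn(bucket_name),
    }


def create_flow_log(request: dict) -> dict:
    """Send the request to AWS. Requires boto3 and valid credentials."""
    import boto3  # imported lazily so the builder works without boto3

    return boto3.client("ec2").create_flow_logs(**request)


# The VPC ID below is hypothetical; the bucket name is the one used in this post.
s3_request = build_s3_flow_log_request("vpc-0123456789abcdef0", "crishantha-vpc-flow-logs")
print(s3_request["LogDestination"])
```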

Step 06: Run Query via Athena

Go to Athena.

Select the database as “default”.

Enter the following query in the “New Query 1” text box.

Note: The following query is taken from the AWS documentation [2]. Change the bucket name, account ID, and region in the S3 bucket location to match your setup.

CREATE EXTERNAL TABLE IF NOT EXISTS vpc_flow_logs (

version int,

account string,

interfaceid string,

sourceaddress string,

destinationaddress string,

sourceport int,

destinationport int,

protocol int,

numpackets int,

numbytes bigint,

starttime int,

endtime int,

action string,

logstatus string

) PARTITIONED BY (`date` date)

ROW FORMAT DELIMITED FIELDS TERMINATED BY ' '

LOCATION 's3://crishantha-vpc-flow-logs/AWSLogs/129992820683/vpcflowlogs/us-east-1/'

TBLPROPERTIES ("skip.header.line.count"="1");

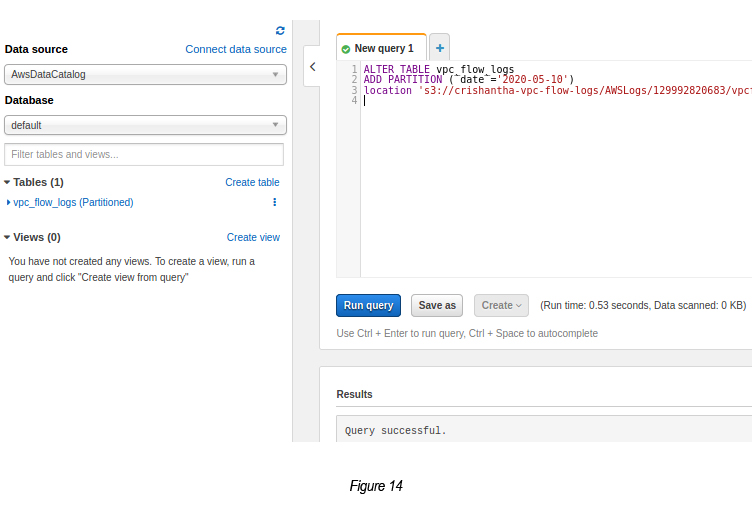

Now add a partition so that Athena can read the data for a specific date.

ALTER TABLE vpc_flow_logs

ADD PARTITION (`date`='2020-05-10')

location 's3://crishantha-vpc-flow-logs/AWSLogs/129992820683/vpcflowlogs/us-east-1/2020/05/09';

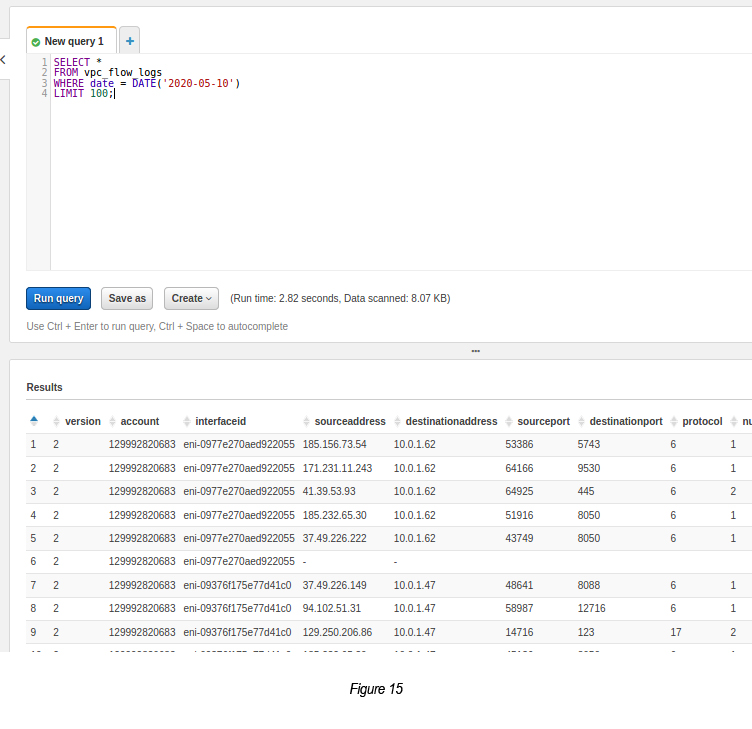
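Since a partition has to be added for every day of logs, it can help to generate the statement rather than type it. The following is a minimal sketch that builds the ALTER TABLE statement from a date, assuming the partition value and the date folder in the S3 path refer to the same day. The function name is illustrative; the bucket, account ID, and region are the ones used in this post.

```python
from datetime import date


def add_partition_statement(
    bucket: str, account_id: str, region: str, day: date,
    table: str = "vpc_flow_logs",
) -> str:
    """Build an ALTER TABLE ... ADD PARTITION statement for one day of logs."""
    # Flow logs are delivered under a YYYY/MM/DD folder per day.
    location = (
        f"s3://{bucket}/AWSLogs/{account_id}/vpcflowlogs/{region}/{day:%Y/%m/%d}/"
    )
    return (
        f"ALTER TABLE {table}\n"
        f"ADD PARTITION (`date`='{day.isoformat()}')\n"
        f"LOCATION '{location}';"
    )


statement = add_partition_statement(
    bucket="crishantha-vpc-flow-logs",
    account_id="129992820683",
    region="us-east-1",
    day=date(2020, 5, 10),
)
print(statement)
```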

Once the partition is created, you can run queries against the table, filtering on the date partition column.
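As an illustration of such a query, the sketch below builds a SELECT that is restricted to one partition and wraps it in the parameters for the Athena `start_query_execution` call. It assumes boto3 and valid credentials for the actual submission; the chosen columns, the row limit, and the results location (`athena-results/` under the same bucket) are hypothetical choices rather than part of the original setup.

```python
from datetime import date


def build_partition_query(day: date, limit: int = 100) -> str:
    """Build a SELECT over vpc_flow_logs restricted to one date partition."""
    return (
        "SELECT sourceaddress, destinationaddress, destinationport, action\n"
        "FROM vpc_flow_logs\n"
        f"WHERE date = DATE('{day.isoformat()}')\n"
        f"LIMIT {int(limit)}"
    )


def build_athena_request(query: str, output_location: str) -> dict:
    """Build start_query_execution parameters for the 'default' database."""
    return {
        "QueryString": query,
        "QueryExecutionContext": {"Database": "default"},
        "ResultConfiguration": {"OutputLocation": output_location},
    }


def start_query(request: dict) -> str:
    """Submit the query and return its execution ID. Requires boto3."""
    import boto3  # imported lazily so the builders work without boto3

    response = boto3.client("athena").start_query_execution(**request)
    return response["QueryExecutionId"]


# The output location is a hypothetical prefix for Athena query results.
athena_request = build_athena_request(
    build_partition_query(date(2020, 5, 10)),
    "s3://crishantha-vpc-flow-logs/athena-results/",
)
print(athena_request["QueryString"])
```

Filtering on the partition column means Athena only scans the files under that partition's location, which keeps the amount of data read per query small.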

References

- [1] CloudWatch Logs Insights VPC Flow Log Sample Queries: https://docs.aws.amazon.com/AmazonCloudWatch/latest/logs/CWL_QuerySyntax-examples.html

- [2] Athena VPC Flow Log Examples: https://docs.aws.amazon.com/athena/latest/ug/vpc-flow-logs.html

Crishantha Nanayakkara

Vice President - Technology
